Growing use of AI in design and inspection workflows introduces internal security risks, as improper tools may expose sensitive data and compromise compliance.

Security has become a major issue for the industry over the past couple of decades. Long gone are the times when information could be shared without concern that it would be intercepted, copied or stolen during the course of “normal” operations. Communications technology has changed and evolved, and new threats have emerged. The regrettable result is that security is now front and center among any company’s day-to-day concerns.

As the need for tighter and more comprehensive security has heightened, most companies have invested significantly in updating ERP and design software, and in installing firewalls and security software to protect databases, email and other communication tools. Many have invested in compliance with NIST SP 800-171, the current benchmark for IT, cybersecurity and facility physical security, and many are certified to IPC-1791 or in the process of becoming certified to CMMC. All these protocols are excellent, albeit extremely difficult and expensive – and, as many have grumbled, perhaps a bit of overkill.

With wars under way in Ukraine and the Middle East, I expect that many companies in the industry have heightened concerns over security. Indeed, belligerents have used cyberattacks as a weapon against companies in enemy countries, even companies not directly related to the war effort, to destabilize and create collateral damage. These concerns are real and need to be taken seriously, especially in our technology-centric industry. But while security risks from countries waging war are concerning, so is the enemy within.

That enemy within may have nothing to do with global conflict. Rather, it comes from a desire to improve one’s company by utilizing and harnessing the latest technology. And that technology is AI. Simply put, the efforts to harness and utilize AI to improve efficiency and productivity could inadvertently put a company at risk.

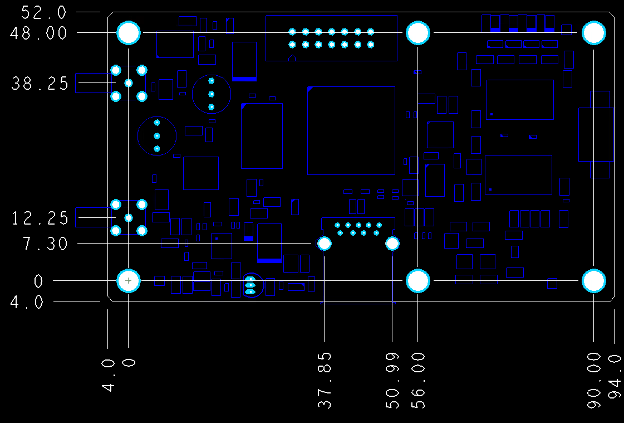

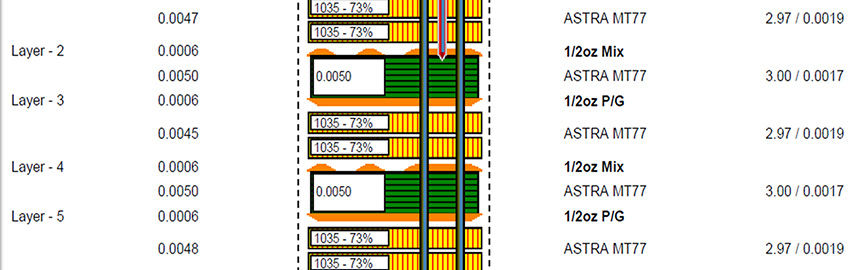

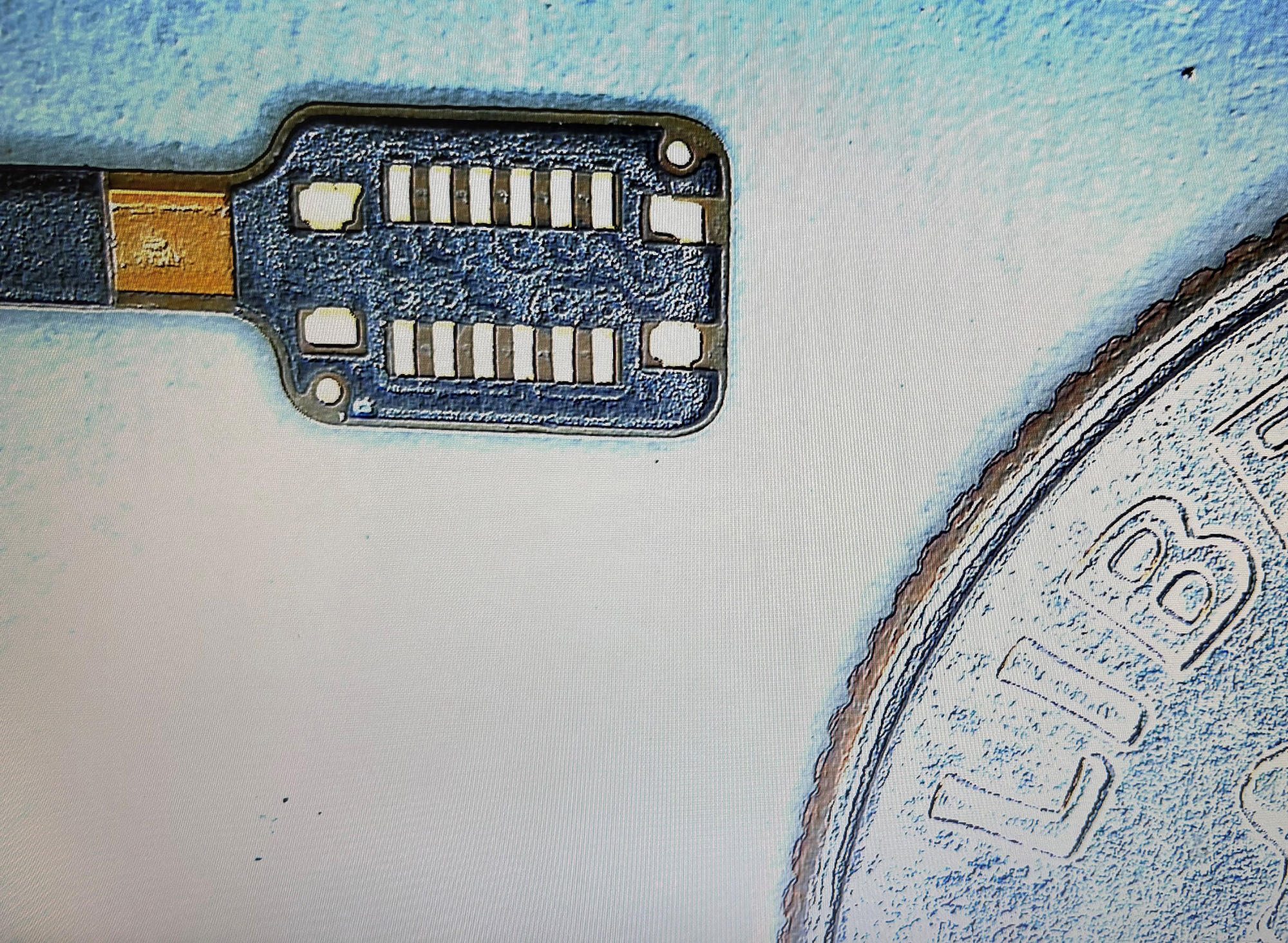

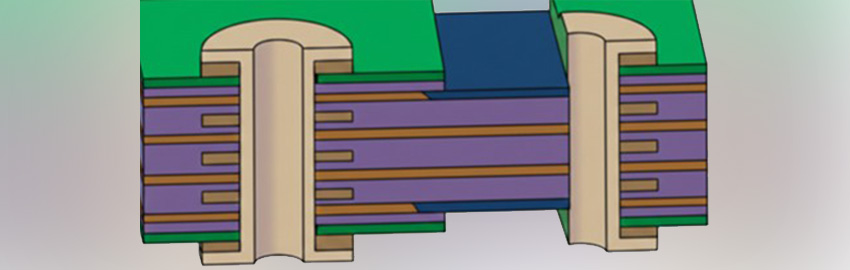

How could this be? The two most vulnerable areas to look at are design and inspection. In design, an overzealous manager wants to decrease the time to develop a product or to release the prints. To accomplish this, the manager determines that their designers should “design” but AI should review and verify; in short, “button-up” files before they are released to the shop floor and suppliers. Meanwhile, the quality manager wants to increase the throughput of FAIs (first article inspections) and determines that AI may be able to do the tasks in less time than staff can accomplish. In both cases, managers have identified bottlenecks and a potential solution, and set off to make it happen. But this is where the wheels could come off, and security could be irreparably damaged.

In the interest of time, the managers begin playing with readily available AI software. They commit time and effort to develop the tools they need to accomplish their goal of improving workflows. However, they are using AI software that takes their IP and CUI (controlled unclassified information) and puts it in the public domain – a direct violation of ITAR. Voilà! Employees trying to make an improvement inadvertently become the enemy within!

All the investment into IT and cybersecurity, then, is compromised by otherwise dedicated employees who find an application they believe will offer value-added improvement but in practice opens the back door that compromises company security, and potentially customer and supplier security as well.

AI offers phenomenal potential for process improvement opportunities. Rather than fearing AI, all employees should be looking at how it can assist. But due diligence regarding the choice of AI platform and how it is deployed must be exercised to secure and protect against input data becoming part of the public domain or held on an insecure, easily hackable platform.

Commitment to security, for a business, its suppliers and customers, as well as individual employees, requires continued dedication. World events catch our attention and cause every executive and manager to pause, be concerned, and focus on what could happen. Sometimes, especially with new technologies such as AI, an inward focus is equally important, to make sure that outside risks do not displace attention to the risks within.

Peter Bigelow has more than 30 years’ experience as a PCB executive, most recently as president of FTG Circuits Haverhill.
