TAIPEI – AI server shipments are forecast to rise by nearly 20% quarter over quarter in the second quarter, and the full-year forecast of 1.67 million units represents year-over-year growth of 41.5%, according to TrendForce's latest industry report.

Meanwhile, gradual production expansion at TSMC, SK hynix, Samsung, and Micron significantly eased shortages in the second quarter, and the lead time for Nvidia's flagship H100 solution has fallen from 40–50 weeks to fewer than 16 weeks, the report found.

TrendForce notes that major cloud service providers (CSPs) continue to concentrate their budgets on procuring AI servers this year, crowding out growth in general servers. By contrast with the rapid growth of AI servers, general server shipments are expected to grow only 1.9% for the year. AI servers are expected to account for 12.2% of total server shipments, an increase of about 3.4 percentage points from the second quarter of 2023.

In terms of market value, AI servers are contributing far more to revenue growth than general servers. The market value of AI servers is projected to exceed $187 billion in 2024, up 69% and accounting for 65% of the total server market value.

North American CSPs (e.g., AWS, Meta) are steadily expanding their use of proprietary ASICs, and Chinese companies such as Alibaba, Baidu, and Huawei are actively developing their own ASIC-based AI solutions. As a result, ASIC servers are expected to account for 26% of the AI server market in 2024, while mainstream GPU-equipped AI servers will account for about 71%.

Among AI chip suppliers for AI servers, Nvidia holds the largest market share, approaching 90% for GPU-equipped AI servers, while AMD's share is only about 8%. However, when all AI chips used in AI servers (GPU, ASIC, FPGA) are counted, Nvidia's market share this year is around 64%.

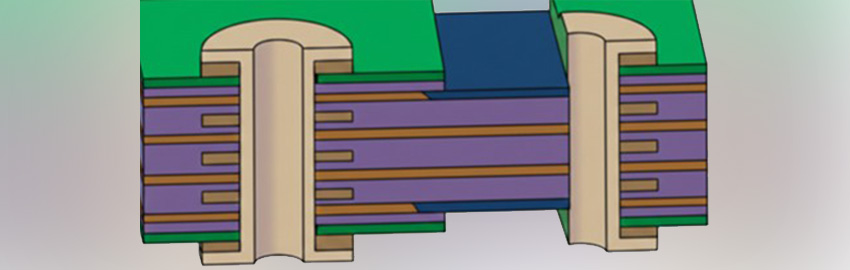

TrendForce expects demand for advanced AI servers to remain strong through 2025, especially as Nvidia's next-generation Blackwell platform (including GB200 and B100/B200) replaces Hopper as the market mainstream. This will also drive demand for CoWoS packaging and HBM. Nvidia's B100 chip will be twice the size of the H100, consuming more CoWoS capacity. CoWoS production capacity at major supplier TSMC is estimated to reach 550,000–600,000 units by the end of 2025, growth of nearly 80%.

In 2024, the mainstream H100 is equipped with 80GB of HBM3. By 2025, leading chips such as Nvidia's Blackwell Ultra and AMD's MI350 are expected to carry up to 288GB of HBM3e, more than tripling per-chip HBM capacity. Driven by sustained demand in the AI server market, overall HBM supply is expected to double by 2025.