As the demographics shift, will the software keep up?

What’s the single, most constant and reliable aspect of electronic hardware engineering that we all expect, dream about, wish for and depend on? Change, of course!

Change manifests itself in a multitude of ways: ever-increasing design complexity, demands for higher system performance, smaller and faster devices, pressure to lower product costs, shrinking time-to-market, advances in manufacturing technologies, and industry trends such as the Internet of Things (IoT).

While these are all factors that we as engineers need to adapt to, one variable is often overlooked: how we change our own behavior to remain as innovative and productive as ever.

Over the past couple of years, I’ve followed with interest the resurgence of the “tall, thin engineer” in the design community. The concept is not new, particularly in chip design, where a seasoned, respected and knowledgeable person assumes responsibility for many (if not all) aspects of the design flow. What has changed is that the role and its requirements have expanded into mainstream FPGA/PCB hardware design.

Figure 1. Tall, thin engineers now deal with complex FPGA integration for synthesis and I/O optimization.

Fewer engineering teams can afford the luxury of organizing into specialist disciplines or silos, with dedicated teams of design engineers, specialists and PCB layout designers. Teams are forced to make tradeoffs between cost, performance (signal, power, thermal), manufacturability, form factor and reliability. They must either consult multiple experts for opinions on which to base their decisions, or make the decisions themselves. To be adequately equipped to make those decisions themselves, they need access to a wider set of technology and tools, where ease of use and integration across the entire design flow are critical.

Step up the tall, thin hardware engineer, now responsible for system definition, design creation, analysis and verification, FPGA design/integration and PCB layout, through to handoff to manufacturing.

They are more than likely operating as individual engineers or within small design teams, working on prototype or project-based designs rather than within enterprise-like operations. In that setting, innovation, creativity, self-reliance, versatility and nimbleness drive them to exceed their quality, cost and time-to-market targets.

These engineers are usually budget-conscious, and their challenge is not only sourcing tools but insisting on tools that have:

- Easy-to-use design creation.

- Out-of-the-box and ready-to-go libraries that adhere to industry standards (read “minimal effort to be up and running quickly”).

- Analysis and verification capabilities.

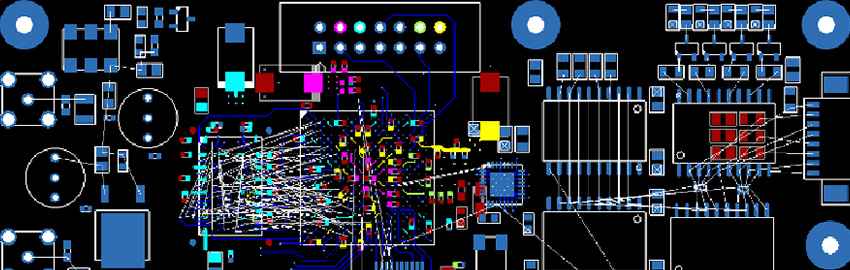

- FPGA synthesis and I/O optimization with integration at the PCB level.

- 3D visualization, integration and collaboration with MCAD.

- Design-for-manufacture checking.

- Automated fabrication outputs and assembly documentation.

The problem is that, with all these different requirements, they must also understand and master a myriad of design tools of which they are typically only casual users.

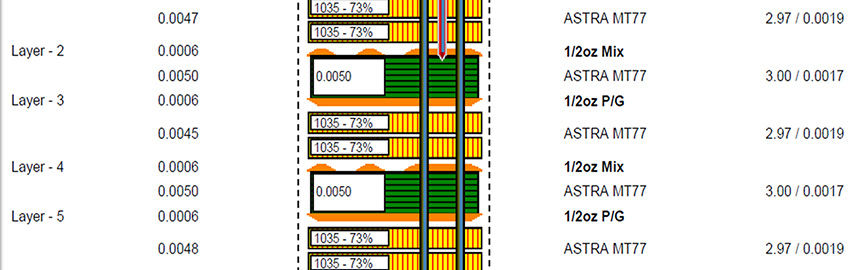

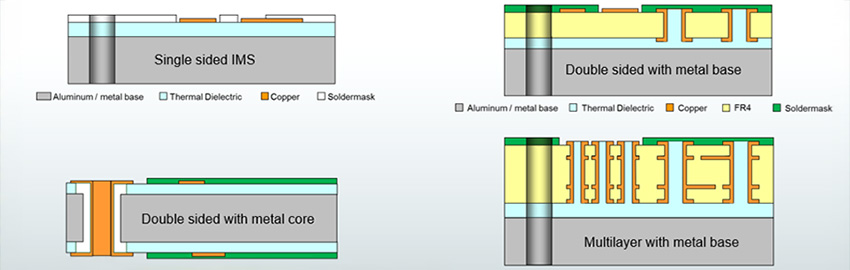

System design typically encompasses in-depth signal integrity analysis and verification, covering not just simple termination strategies but also crosstalk analysis and the routing and tuning of high-speed protocols such as DDR4 and PCIe. It also includes power integrity analysis and thermal characterization, all to ensure that the system performs as defined and required.

Then you also have to consider the physical packaging requirements. It is well known that the MCAD and ECAD domains can no longer function in isolation. Whether through interfacing, integration or collaboration, these traditionally detached disciplines must operate in closer harmony. Today’s tall, thin hardware engineer has neither the time nor the inclination to master a toolset that is typically outside their design sphere, yet they are still responsible for the design and performance of the whole system.

We often compartmentalize design tools into “desktop” and “enterprise” solutions. Desktop offerings cost less, are easy to use out of the box, and have a broad level of functionality that touches on many of the facets required to deliver a complete design. However, they also require interfaces to specialized tools for more in-depth analysis and to plug gaps in areas such as FPGA design and analysis and verification. Enterprise solutions, on the other hand, are often more expensive, require internal IT resources to manage tool and design data across geographically dispersed engineering teams, and are not as easy to use, but without a doubt have the best functionality, offering depth in all areas.

So the challenge is how to give those tall, thin hardware engineers ready and affordable access to all the design tools they need, so that they remain innovative while shortening learning curves, increasing productivity and retaining their competitive edge.

Consolidation of design tools, rather than collaboration, may be a decisive factor. Provide a solution that is affordable, yet based on the best technology that delivers both breadth and depth across the entire system design flow. Wouldn’t that be something?

Steve Hughes is digital marketing manager at Mentor Graphics, Systems Design Division (mentor.com).