We’ve come a long way since the first 3D-printed item, an eye wash cup. Today we can rapidly fabricate things like car parts, musical instruments, and even biological tissues and organoids.

While many of these objects can be freely designed and quickly made, embedding electronics such as sensors, chips, and tags usually requires designing the object and the electronics separately, which makes it difficult to create items where the added functions are cleanly integrated with the form.

Now, a 3D design environment from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) lets users iterate an object’s shape and electronic function in one cohesive space, to add existing sensors to early-stage prototypes.

The team tested the system, called MorphSensor, by modeling an N95 mask with a humidity sensor, a temperature-sensing ring, and glasses that monitor light absorption to protect eye health.

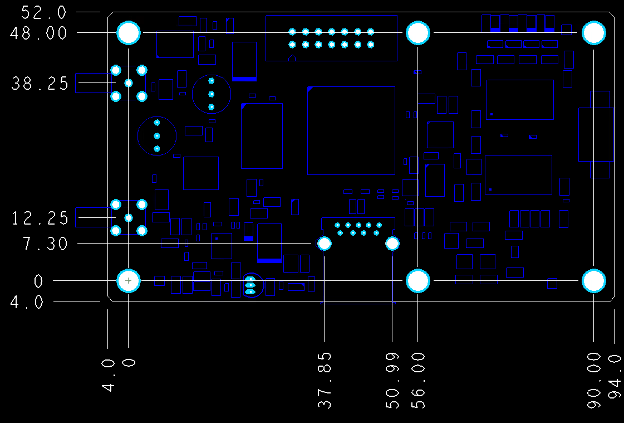

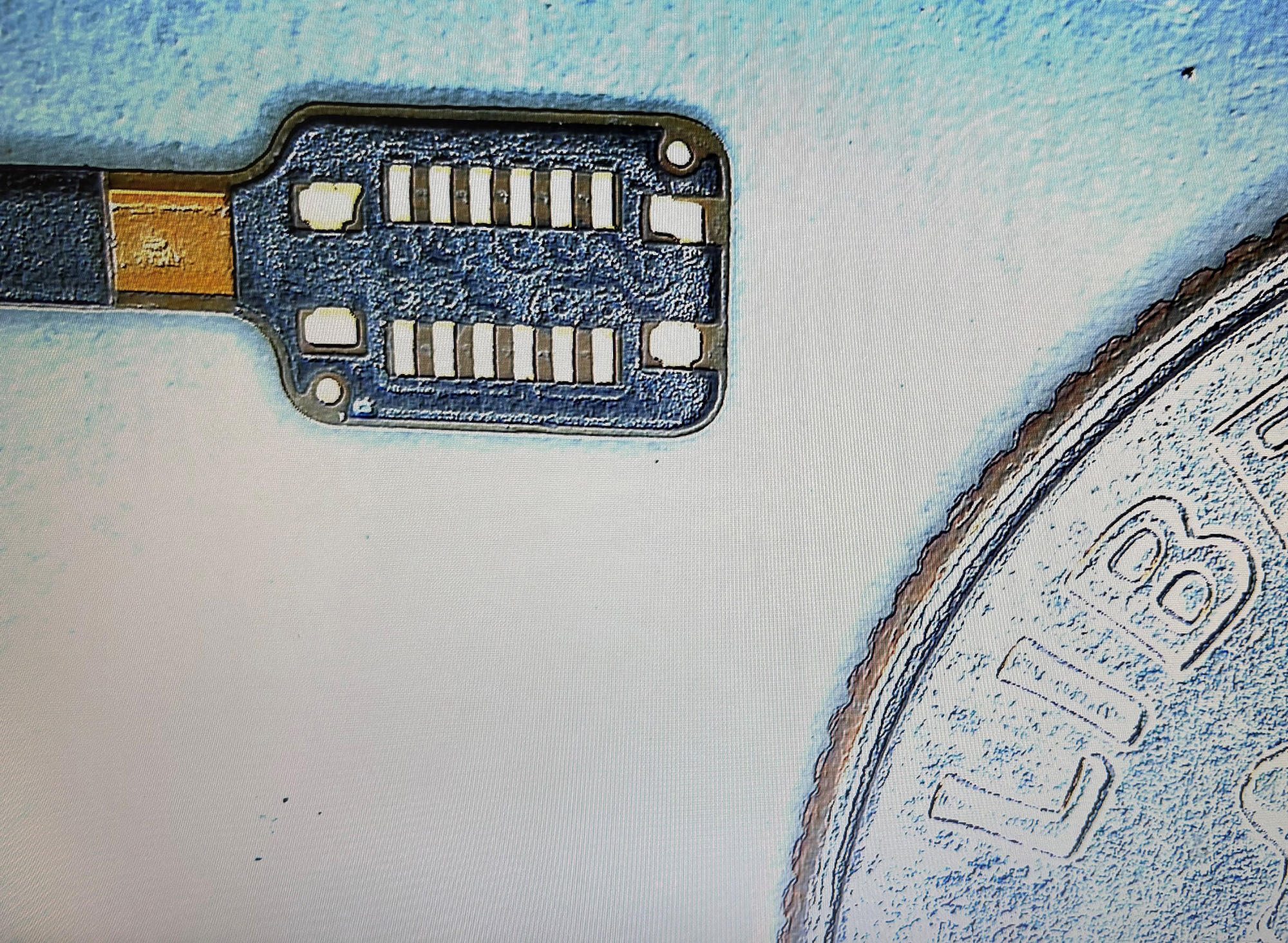

MorphSensor automatically converts electronic designs into 3D models, and then lets users iterate on the geometry and manipulate active sensing parts. This might look like a 2D image of a pair of AirPods and a sensor template, where a person could edit the design until the sensor is embedded, printed, and taped onto the item.

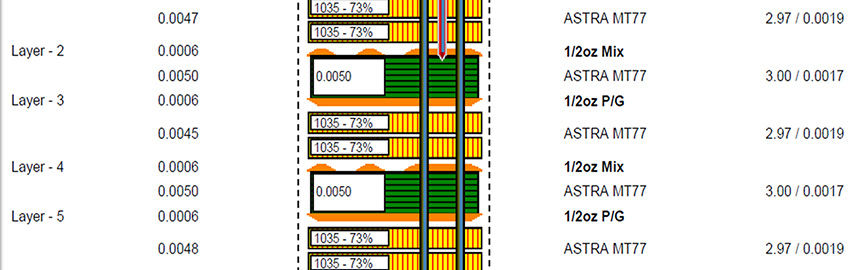

To test the effectiveness of MorphSensor, the researchers created an evaluation based on standard industrial assembly and testing procedures. The data showed that MorphSensor could match the off-the-shelf sensor modules with small error margins, for both the analog and digital sensors.

An MIT team used MorphSensor to design multiple applications, including a pair of glasses that monitor light absorption to protect eye health.

“MorphSensor fits into my long-term vision of something called ‘rapid function prototyping’, with the objective to create interactive objects where the functions are directly integrated with the form and fabricated in one go, even for non-expert users,” says CSAIL PhD student Junyi Zhu, lead author on a new paper about the project. “This offers the promise that, when prototyping, the object form could follow its designated function, and the function could adapt to its physical form.”

MorphSensor in Action

Imagine being able to have your own design lab where, instead of needing to buy new items, you could cost-effectively update your own items using a single system for both design and hardware.

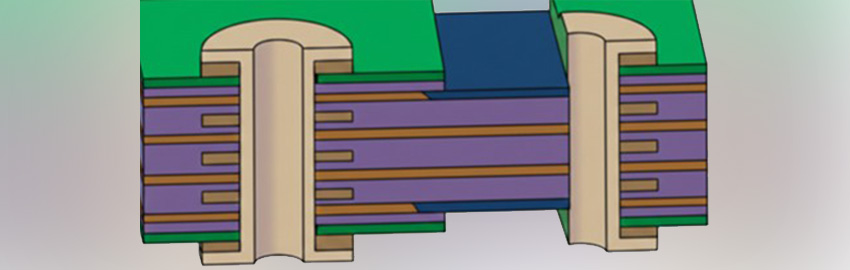

For example, let’s say you want to update your face mask to monitor surrounding air quality. Using MorphSensor, you would first design or import the 3D face mask model and the sensor modules, drawing on either MorphSensor's database or open-source files online. The system would then generate a 3D model with individual electronic components (with airwires connected between them) and color-coding to highlight the active sensing components.

Designers can then drag and drop the electronic components directly onto the face mask, and rotate them based on design needs. As a final step, users draw physical wires onto the design where they want them to appear, using the system’s guidance to connect the circuit.
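To make the wiring step concrete, here is a minimal Python sketch of one way a tool could check whether hand-drawn wires cover every airwire (a required but not yet routed connection). It is an illustration under stated assumptions, not MorphSensor's actual implementation: the pin names, the `unrouted_airwires` helper, and the union-find approach are all hypothetical.

```python
from typing import Dict, Iterable, List, Tuple

Pin = str               # hypothetical pin label, e.g. "sensor.VCC"
Wire = Tuple[Pin, Pin]  # a connection between two pins


def find(parent: Dict[Pin, Pin], pin: Pin) -> Pin:
    """Return the representative of the electrically connected group of `pin`."""
    parent.setdefault(pin, pin)
    while parent[pin] != pin:
        parent[pin] = parent[parent[pin]]  # path halving keeps lookups fast
        pin = parent[pin]
    return pin


def unrouted_airwires(airwires: Iterable[Wire],
                      drawn_wires: Iterable[Wire]) -> List[Wire]:
    """List the airwires whose two pins are not yet joined by drawn wires."""
    parent: Dict[Pin, Pin] = {}
    for a, b in drawn_wires:
        # Merge the groups of the two pins that this drawn wire touches.
        parent[find(parent, a)] = find(parent, b)
    return [(a, b) for a, b in airwires
            if find(parent, a) != find(parent, b)]


# Assumed example: a three-pin sensor wired to a microcontroller.
airwires = [
    ("sensor.VCC", "mcu.3V3"),
    ("sensor.GND", "mcu.GND"),
    ("sensor.OUT", "mcu.A0"),
]
drawn = [
    ("sensor.VCC", "junction.1"),  # power routed through an intermediate point
    ("junction.1", "mcu.3V3"),
    ("sensor.GND", "mcu.GND"),
]
missing = unrouted_airwires(airwires, drawn)
print(missing)
```

In this assumed example the signal line from `sensor.OUT` to `mcu.A0` has not been drawn yet, so it is the one connection a design tool would still need to flag.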

Once users are satisfied with the design, the "morphed sensor" can be rapidly fabricated using an inkjet printer and conductive tape, so it can be adhered to the object. Users can also outsource the design to a professional fabrication house.

To test their system, the team iterated on EarPods for sleep tracking, which only took 45 minutes to design and fabricate. They also updated a “weather-aware” ring to provide weather advice, by integrating a temperature sensor with the ring geometry. In addition, they manipulated an N95 mask to monitor its substrate contamination, enabling it to alert its user when the mask needs to be replaced.

In its current form, MorphSensor helps designers maintain connectivity of the circuit at all times, by highlighting which components contribute to the actual sensing. However, the team notes it would be beneficial to expand this set of support tools: future versions could potentially merge the electrical logic of multiple sensor modules to eliminate redundant components and circuits, saving space or preserving the object form.

Zhu wrote the paper alongside MIT graduate student Yunyi Zhu; undergraduates Jiaming Cui, Leon Cheng, Jackson Snowden, and Mark Chounlakone; postdoc Michael Wessely; and Professor Stefanie Mueller. The team will virtually present their paper at the ACM User Interface Software and Technology Symposium.

This material is based upon work supported by the National Science Foundation.

This article was first published in MIT News and is republished here with permission of Rachel Gordon and MIT.